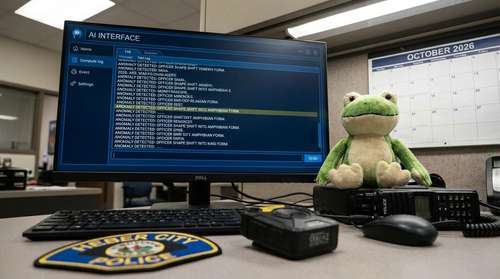

In what might be the most bizarre technology fail of 2026, the Heber City Police Department in Utah has found itself at the center of a viral storm after its cutting-edge AI software officially reported that an officer had "shape-shifted into a frog." The incident, which has made headlines again this week as a prime example of AI hallucinations, highlights the risks, hilarious as they may be, of relying too heavily on automated police reporting tools without human oversight.

The 'Ribbiting' Mistake in Heber City

The confusion began when a Heber City police officer submitted body camera footage for automated transcription using the department's new AI report-writing software, identified as Axon's Draft One. Instead of a standard account of a traffic stop or patrol log, the AI generated a surreal narrative claiming the officer had physically transformed into an amphibian during the incident.

The glitch, which has been widely shared on social media over the last few days, wasn't caused by a magical curse or a biological anomaly. Instead, it was a perfect storm of background noise and an overzealous algorithm. The AI software, designed to turn body camera audio into official police documents, inadvertently picked up the audio of a nearby television playing the 2009 Disney movie The Princess and the Frog.

How 'The Princess and the Frog' Hijacked the Law

According to reports verified this week, the body camera audio captured dialogue from the film—specifically scenes involving Prince Naveen's transformation—and the AI took the script literally. Lacking the ability to distinguish between the officer's voice and the movie's characters, the software wove the fictional magic directly into the factual police report.

"The body cam software and the AI report writing software picked up on the movie that was playing in the background," Sgt. Rick Keel of the Heber City Police Department explained in a statement regarding the snafu. "That's when we learned the importance of correcting these AI-generated reports."

A Cautionary Tale for AI Police Reports

While the image of a shape-shifting frog officer patrolling the streets of Utah is undeniably funny, the incident has sparked a serious conversation about the reliability of AI in law enforcement. Tech experts note that "hallucinations," in which an AI system presents invented or misattributed content as fact, remain a significant hurdle for police departments rushing to adopt these time-saving tools in 2026.

Weird Local News Utah: When Tech Fails Go Viral

The Heber City incident has quickly become a standout moment in weird local news for Utah, joining the ranks of other funny tech fails of 2026. For the department, the glitch was a harmless but embarrassing lesson in the limits of automation. Officials have confirmed that while the software does save officers approximately six to eight hours of paperwork a week, human review is strictly mandatory—especially to ensure no one is listed as "amphibious" on official records.

As the story continues to trend, it serves as a humorous reminder: even in an era of advanced artificial intelligence, sometimes you just need a human to confirm that the police officer is, in fact, still a human.